Crucial reveals how to overcome the top 5 server workload constraints

SYDNEY, 25 January 2017 – Server memory is a component that’s either sufficient or insufficient. If you have a sufficient amount of memory for your workloads, you likely don’t even think about RAM because other problems consume your attention. However, when you have insufficient memory, your servers and your organisation’s productivity slow to a crawl because DRAM feeds your CPUs. That’s why in a recent Spiceworks survey of over 350 IT decision-makers, 47% noted that they planned to add more server memory in the coming year, even though half of all their servers were already running at the maximum installed memory capacity.* These findings come as no surprise because of how memory helps overcome five of the most pressing workload constraints.

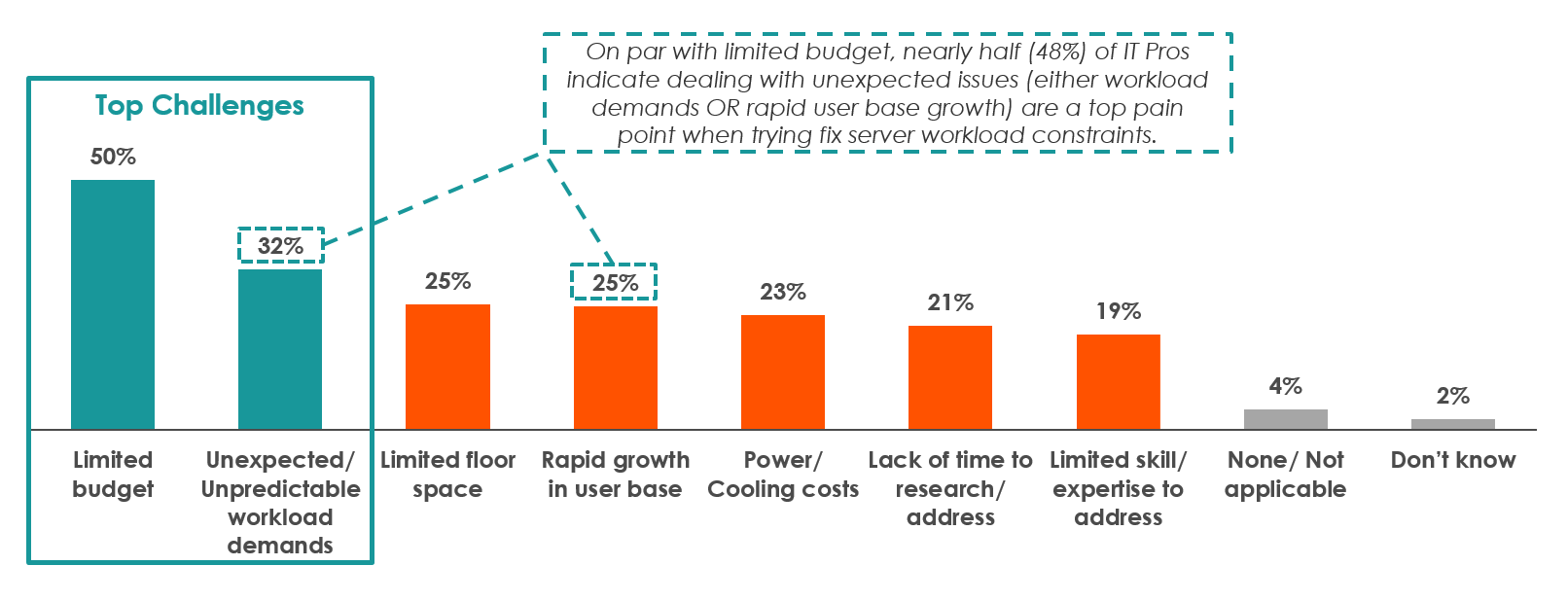

Michael Moreland, Crucial Server DRAM Product Marketing Manager, said, “We partnered with and commissioned the Spiceworks survey to see and hear what challenges IT pros are experiencing in their data centres. Based on what they’ve said, it’s clear that they believe server memory is critical for improving system performance. Adding more memory to servers improves CPU performance and efficiency, which ultimately helps alleviate the top five workload constraints they mentioned: limited budget, unexpected or unpredictable workload demands, limited floor space, rapid growth in user base, and power or cooling costs.”

Spiceworks asked, “What are the top challenges you currently face in overcoming server workload constraints?” and respondents were able to select up to three answers.

The 353 respondents selected by Spiceworks were required to have purchase influence in their organisation and were required to have at least 30 physical servers and be using virtualisation software. Overall, 23 industries were represented (ranging from technology to energy to manufacturing) and 74% of respondents were running 100 or more physical servers, with 41% running over 200 boxes.

How memory helps get the most out of your IT budget

Moreland added, “When we surveyed the 350-plus IT managers from around the world, they listed a limited budget as their top workload constraint. Making the most of scarce resources is a hallmark of modern IT, and that’s why it’s critical to keep the total cost of ownership (TCO) down. That’s where adding server memory can help – maxing out a server’s memory provides fuel for the CPU to run optimally, allowing you to use fewer servers to accomplish more. With each server functioning more efficiently, it limits the power, cooling, and burdensome licensing costs that come with having more servers in your server room.”

Getting the most out of dwindling budgets often comes down to comparing acquisition cost to TCO. If you increase a server’s efficiency, you decrease its TCO because you’re getting more performance out of it over the same amount of time. Since memory is what feeds processing cores, it’s one of the most effective ways to make your CPUs more efficient and productive, allowing you to handle growing workloads without having to buy new servers.

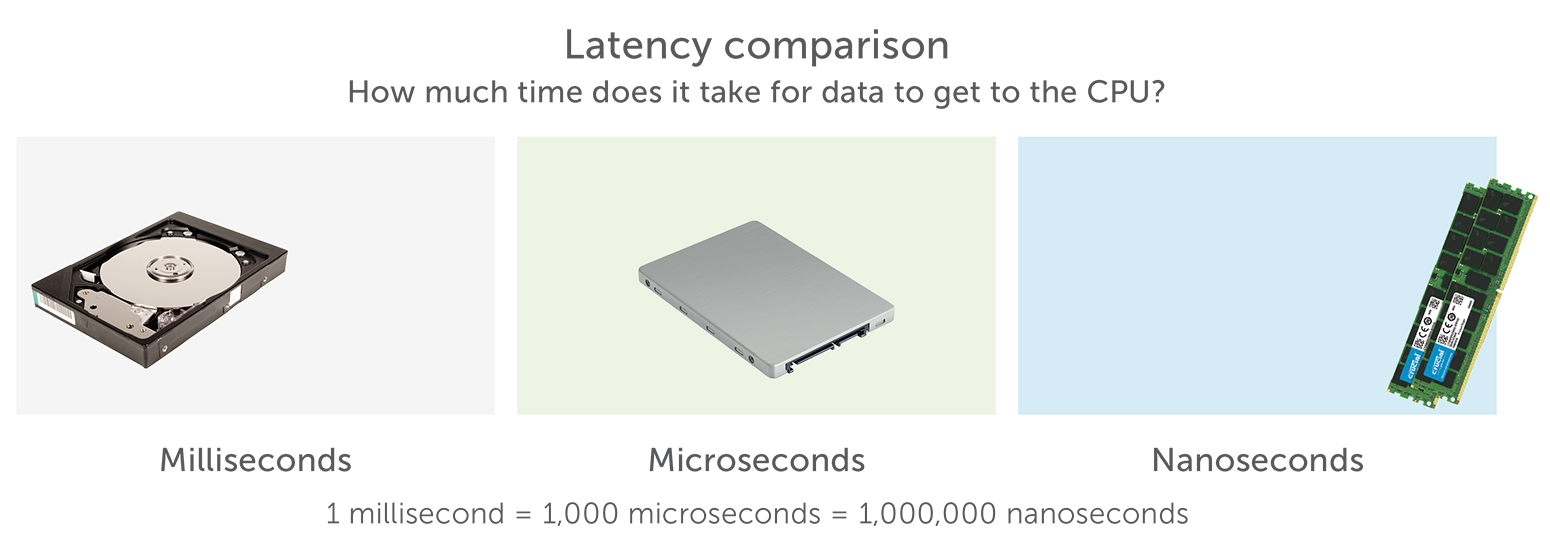

Specifically, more memory gives your system more of its fastest resource. Since memory resides closer to the CPU, it takes less time for data to get from DRAM to the CPU than it does to go from storage to the CPU. For example, hard drives typically get data to the CPU in milliseconds, whereas enterprise SSDs get data to the CPU in microseconds. This is certainly a vast improvement, but it’s still a higher latency than DRAM, which is able to get data to the CPU in nanoseconds (with latency, lower is of course better). When you consider the millions of instructions that are fed to the processor each day, feeding data to the CPU via memory delivers a significant performance difference. Time is money, and more memory helps deliver the best possible return on your CPU investment.
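To put the milliseconds, microseconds and nanoseconds comparison in perspective, the short Python sketch below expresses each tier's latency as a multiple of DRAM latency. The specific figures (5 ms, 100 µs, 100 ns) are illustrative assumptions chosen only to match the orders of magnitude described above; they are not measurements of any particular drive or memory module.

```python
# Assumed, order-of-magnitude latencies in nanoseconds (illustrative only,
# not measured values for any specific product).
LATENCY_NS = {
    "hard drive": 5_000_000,    # about 5 milliseconds
    "enterprise SSD": 100_000,  # about 100 microseconds
    "DRAM": 100,                # about 100 nanoseconds
}


def slowdown_vs_dram(latencies):
    """Return each tier's latency as a whole-number multiple of DRAM latency."""
    dram = latencies["DRAM"]
    return {name: ns // dram for name, ns in latencies.items()}


ratios = slowdown_vs_dram(LATENCY_NS)
for name, ratio in ratios.items():
    print(f"{name}: {ratio:,}x DRAM latency")
```

Under these assumed figures, every request that is served from memory instead of storage avoids a delay that is several orders of magnitude longer.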

How memory helps alleviate unexpected or unpredictable workload demands

Virtualised workloads are all about maintaining consistent quality of service (QoS) and eliminating on-again/off-again variance. Overall, more RAM helps eliminate service variance because it provides extra resources for virtualised applications to store and use active data (which lives in memory). When unpredictable workload spikes exhaust available memory, the system scrambles to find available resources, performance drops, and disk thrashing is typically the result. More memory addresses this problem by giving your applications more headroom to absorb workloads that rise, fall, and spike.

How memory helps maximise limited floor space

Think of floor space limits as a constructive problem to solve: What’s the minimum number of servers you need to accomplish your workload? This kind of thinking helps lighten your enterprise footprint because every server that’s underutilised costs you more. For example, if you used 5 maxed-out servers to accomplish the workload of 10 half-full or old servers, you’d save on power, cooling, and software licenses – the big killer. When floor space is at a premium, there’s really only one thing to do: scale up. Scaling up almost always involves increasing a server’s installed memory capacity to get as much out of the box and feed as many VMs as possible.
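The 5-versus-10 server example can be turned into a rough annual cost comparison. The sketch below is a minimal illustration, not a TCO model: the per-server power draw, electricity price, cooling overhead and license cost are all hypothetical placeholders, and it assumes (as the example above does) that 5 maxed-out servers can carry the same workload as 10 half-full ones. Substitute your own figures before drawing conclusions.

```python
from dataclasses import dataclass

HOURS_PER_YEAR = 24 * 365


@dataclass
class Fleet:
    servers: int
    watts_per_server: float    # hypothetical average draw per server
    license_per_server: float  # hypothetical annual license cost per server

    def annual_cost(self, price_per_kwh, cooling_overhead):
        """Energy (plus a proportional cooling overhead) and licensing per year."""
        kwh = self.servers * self.watts_per_server * HOURS_PER_YEAR / 1000
        energy = kwh * price_per_kwh * (1 + cooling_overhead)
        return energy + self.servers * self.license_per_server


# Assumption: a fully populated server draws more power than a half-full one.
half_full = Fleet(servers=10, watts_per_server=350, license_per_server=3000)
maxed_out = Fleet(servers=5, watts_per_server=450, license_per_server=3000)

half_cost = half_full.annual_cost(price_per_kwh=0.25, cooling_overhead=0.5)
maxed_cost = maxed_out.annual_cost(price_per_kwh=0.25, cooling_overhead=0.5)

print(f"10 half-full servers: {half_cost:,.2f} per year")
print(f"5 maxed-out servers:  {maxed_cost:,.2f} per year")
print(f"Difference:           {half_cost - maxed_cost:,.2f} per year")
```

With these placeholder inputs, the smaller fleet costs less per year even though each maxed-out server draws more power, because both the energy total and the per-server license fees scale with the number of boxes.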

Moreland continued, “Virtualised applications are heavily dependent on active data, and when there’s a spike in workload activity, available server memory resources are depleted and QoS drops. Filling your servers to their maximum RAM capacities reduces the strain on the systems when activity gets intense. Limited square footage in your server room places a premium on making the most of each individual server. When you don’t have the physical space to scale out, scaling up by maxing out the memory of each server can ultimately match the performance of multiple half-full ones. Fewer servers and equal performance equates to less TCO because your enterprise footprint is smaller and you don’t have to spend big on huge licensing costs.”

How memory helps maintain QoS in spite of rapid growth in user base

Hosting more users requires more memory to maintain the same QoS. By giving the system more RAM, you gain more flexibility and increase its ability to handle unpredictable workload demands caused by the sudden growth in your user base.

How memory helps reduce power or cooling costs

Although fully populating the memory in a server raises its total power consumption, the total consumed energy is often less than using multiple partially full servers to deliver a comparable level of performance. More DRAM helps your servers use power in the most efficient manner possible from a workload perspective (feeding and running the CPU). Plus, if you’re using fewer physical servers, your total power and cooling costs will likely be less.

The bottom line is that memory is like fuel for your CPUs – as long as they have enough of it, they’re OK. But there’s a significant difference between having enough RAM and truly improving workload efficiency. With just enough RAM, you’re certainly able to run applications, but with the maximum installed memory capacity, you’re often able to use fewer servers to get more done at a lower TCO. Don’t starve your CPUs. Know your workload, and if it’s CPU- or memory-dependent, improve efficiency for less with more RAM, not more servers.

Michael Moreland concluded, “Power and cooling costs are directly related to the number of servers in your deployment. Having more servers consumes more power and produces higher temperatures than a room with fewer servers – not to mention how much it raises your software license costs. In the case of servers, more isn’t always better, especially when they can be maxed-out with memory and meet or surpass the same level of performance of half-full servers. Focus on quality, not quantity, when it comes to your server deployment and reduce your power, cooling, and license costs.”

For more information on how to overcome the top 5 server workload constraints, go to crucial.com.

*Survey conducted by Spiceworks in 2016.